Before getting into the Cache Miss Attack, let’s talk a little about caches.

What exactly is a Cache?

- A cache (pronounced “cash”) is a hardware or software component used in computer systems to store frequently accessed data or instructions, making it quickly accessible to the CPU or other processing units.

- The primary purpose of a cache is to reduce the time it takes to retrieve data from the main memory (RAM) or storage devices, as accessing data from these slower sources can be relatively time-consuming compared to accessing data from a cache.

Locality of Reference

- Caches work on the principle of exploiting the locality of reference exhibited by most programs and applications.

- Locality of reference refers to the tendency of programs to access a relatively small portion of their memory space frequently and repeatedly for a short period of time.
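To make the effect of locality concrete, here is a minimal sketch that replays two synthetic access traces through a tiny least-recently-used (LRU) cache. The function name `hit_rate`, the trace sizes, and the cache capacity are illustrative assumptions, not measurements from a real system.

```python
from collections import OrderedDict
import random


def hit_rate(accesses, capacity):
    """Replay an access trace through a small LRU cache and return the hit rate."""
    cache = OrderedDict()
    hits = 0
    for address in accesses:
        if address in cache:
            hits += 1
            cache.move_to_end(address)      # mark as most recently used
        else:
            cache[address] = True
            if len(cache) > capacity:
                cache.popitem(last=False)   # evict the least recently used entry
    return hits / len(accesses)


rng = random.Random(0)
# Trace with locality: the program keeps revisiting the same 8 addresses.
local_trace = [rng.randrange(8) for _ in range(10_000)]
# Trace without locality: accesses are spread uniformly over 10,000 addresses.
scattered_trace = [rng.randrange(10_000) for _ in range(10_000)]

local_rate = hit_rate(local_trace, capacity=16)
scattered_rate = hit_rate(scattered_trace, capacity=16)
print(f"with locality: {local_rate:.2%}, without locality: {scattered_rate:.2%}")
```

With the same 16-entry cache, the trace that keeps returning to a small working set is served almost entirely from the cache, while the scattered trace almost never hits.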

There are two common types of caches:

- CPU Cache:

→ The CPU cache is a small, high-speed memory integrated into the CPU itself or located very close to it.

→ It stores copies of frequently accessed data and instructions from the main memory.

→ When the CPU needs to read or write data, it first checks the cache. If the data is present in the cache (cache hit), it can be retrieved or modified much faster than fetching it from the main memory (cache miss).

→ CPU caches typically have multiple levels, such as L1, L2, and L3, each larger, slower, and farther from the CPU cores than the one before it.

- Disk Cache:

→ It is a software-based cache used to accelerate data access from slower storage devices like hard disk drives (HDDs) or solid-state drives (SSDs).

→ It temporarily stores frequently read data from the disk in a faster memory, such as RAM or SSD, to reduce the time required to access that data again.

→ It helps improve the overall system performance by reducing the bottleneck caused by slower storage devices.

What is a Cache Hit?

- A cache hit occurs when the data or instruction requested by a processor is found in the cache memory during a memory access operation.

- In other words, it means that the processor has successfully located the required information in the cache without the need to access the slower main memory or external storage devices.

What is a Cache Miss?

- A cache miss occurs when the data or instruction requested by a processor is not found in the cache during a memory access operation.

- In other words, it means that the processor attempted to access data that was not already stored in the cache, requiring the CPU to fetch the data from the slower main memory or external storage devices.
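The hit and miss paths described above can be sketched at the application level with a simple cache-aside lookup. The class name `CachedStore` and the dictionary standing in for the slower backing store are hypothetical and chosen only for illustration.

```python
class CachedStore:
    """Cache-aside lookup: check the cache first, fall back to the backing store."""

    def __init__(self, backing_store):
        self.backing_store = backing_store  # stands in for main memory or a database
        self.cache = {}
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.cache:
            self.hits += 1                  # cache hit: fast path
            return self.cache[key]
        self.misses += 1                    # cache miss: go to the slower source
        value = self.backing_store.get(key)
        if value is not None:
            self.cache[key] = value         # keep a copy for the next request
        return value


store = CachedStore({"user:1": "Alice", "user:2": "Bob"})
first = store.get("user:1")    # miss: fetched from the backing store
second = store.get("user:1")   # hit: served from the cache
print(store.hits, store.misses)
```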

What is a “Cache Miss Attack”?

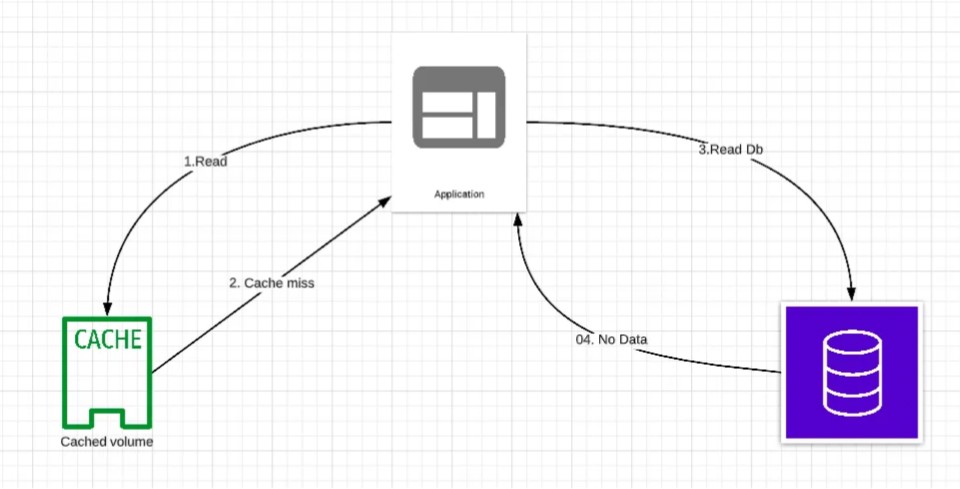

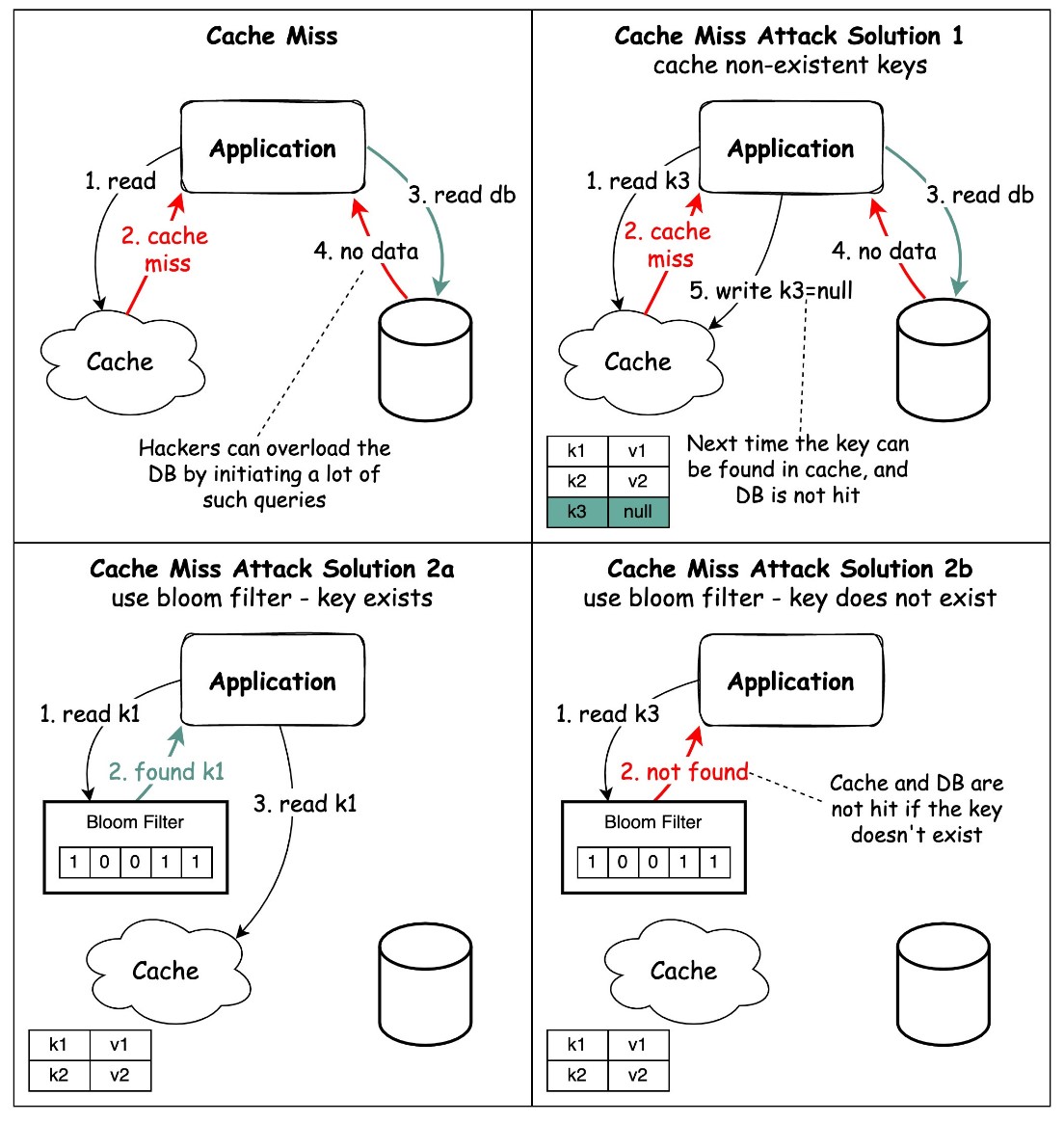

Let’s consider a scenario where a user is trying to access some data from an application and the data to be fetched doesn’t exist in the database and the data isn’t present in the cache either.

So, what’s going to happen in this case?

- Every such request falls through to the database, defeating the purpose of using a cache.

- A malicious user can probe the application with a few queries, work out which kinds of requests miss the cache, and then fire a large number of them to overload the database.
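The scenario above can be reproduced with a small simulation that counts how many requests actually reach the database. The `Database` class, the `lookup` helper, and the key names are assumptions made up for this sketch.

```python
class Database:
    """Toy database that counts how many queries it receives."""

    def __init__(self, rows):
        self.rows = rows
        self.queries = 0

    def find(self, key):
        self.queries += 1
        return self.rows.get(key)


def lookup(key, cache, db):
    """Plain cache-aside read: only rows that exist are ever cached."""
    if key in cache:
        return cache[key]
    value = db.find(key)
    if value is not None:
        cache[key] = value
    return value


db = Database({f"user:{i}": f"name-{i}" for i in range(100)})
cache = {}

# Legitimate traffic: 1,000 reads spread over the 100 existing keys.
for i in range(1000):
    lookup(f"user:{i % 100}", cache, db)
legit_queries = db.queries

# Attack traffic: 1,000 reads of a key that does not exist anywhere.
for _ in range(1000):
    lookup("user:-1", cache, db)
attack_queries = db.queries - legit_queries

print(legit_queries, attack_queries)  # 100 1000
```

The legitimate reads reach the database only once per key, while every single request for the missing key is passed through, because there is never anything to cache.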

How can it be avoided?

- We can design the APIs such that users won’t be able to figure out whether their requests are a cache hit or a cache miss.

- If we have cache keys with null values, we should set a short time-to-live (TTL) for such keys.

- Use a Web Application Firewall (WAF) to detect and block malicious requests.

- Cache the non-existent keys, so that the next request for the same key is answered from the cache and the database isn’t hit again. However, this should be done with caution, as an attacker can inject a malicious payload into a GET request and have the resulting response cached.

- Use a Bloom Filter:

→ It is a space-efficient probabilistic data structure used to test whether an element is a member of a set.

→ It can be used to check whether a key may exist in a data set, such as the keys stored in the database, without actually looking the key up there.

→ This can blunt cache miss attacks because requests for keys that were never stored are rejected before they reach the cache or the database.

→ Bloom filters work by hashing the key with several hash functions and setting the corresponding bits in a bit array.

→ When checking if a key exists, the same hash functions are applied to the key, and the corresponding bits in the bit array are checked. If all the bits are set, the key is probably in the set, but there is a small chance of a false positive. If any of the bits is not set, the key is definitely not in the set.

→ If the filter reports that the key may exist, the request first goes to the cache and then queries the database if needed.

→ If the filter reports that the key does not exist, the key is in neither the cache nor the database, so the request can be answered immediately without touching either.
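Two of the ideas above, the Bloom filter check and caching missing keys with a short TTL, can be combined in one read path. The following is a minimal sketch under stated assumptions: the names `BloomFilter`, `GuardedStore`, `MISSING`, and `NEGATIVE_TTL`, the filter size, and the 30-second TTL are all invented for illustration, and a production system would more likely rely on a tested library.

```python
import hashlib
import time


class BloomFilter:
    def __init__(self, size_bits=4096, num_hashes=3):
        self.size = size_bits
        self.num_hashes = num_hashes
        self.bits = bytearray(size_bits)    # one byte per bit, for readability

    def _positions(self, key):
        # Derive several bit positions by salting SHA-256 with an index.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{key}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.size

    def add(self, key):
        for pos in self._positions(key):
            self.bits[pos] = 1

    def might_contain(self, key):
        # False means "definitely absent"; True means "probably present".
        return all(self.bits[pos] for pos in self._positions(key))


MISSING = object()   # sentinel cached for keys the database does not have
NEGATIVE_TTL = 30    # seconds a "missing" entry stays cached (assumed value)


class GuardedStore:
    def __init__(self, rows):
        self.rows = rows                    # stands in for the database
        self.db_queries = 0
        self.cache = {}                     # key -> (value, expires_at or None)
        self.bloom = BloomFilter()
        for key in rows:
            self.bloom.add(key)

    def get(self, key, now=None):
        now = time.monotonic() if now is None else now
        if not self.bloom.might_contain(key):
            return None                     # rejected before cache or database
        entry = self.cache.get(key)
        if entry is not None and (entry[1] is None or entry[1] > now):
            return None if entry[0] is MISSING else entry[0]
        self.db_queries += 1
        value = self.rows.get(key)
        if value is None:
            # False positive of the filter: remember the miss for a short time.
            self.cache[key] = (MISSING, now + NEGATIVE_TTL)
        else:
            self.cache[key] = (value, None)
        return value


store = GuardedStore({f"user:{i}": f"name-{i}" for i in range(100)})
for i in range(1000):
    store.get(f"user:missing-{i}")          # 1,000 distinct non-existent keys
print("database queries caused by the attack:", store.db_queries)
```

Only the occasional false positive of the filter reaches the database, and the short-lived `MISSING` entry keeps a repeated false positive from hitting it again until the TTL expires. The filter has to be updated whenever new keys are written, which this sketch leaves out.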

Conclusion

It’s essential for software developers and system architects to be aware of the risks associated with cache miss attacks and design their systems with security in mind, especially when dealing with sensitive data. Additionally, operating system and hardware manufacturers continually work on mitigating these vulnerabilities and releasing patches to ensure the security of their systems.

Is this the same as the so-called ‘caching penetration’ or ‘cache poisoning’?